Compute and visualize ERDS maps

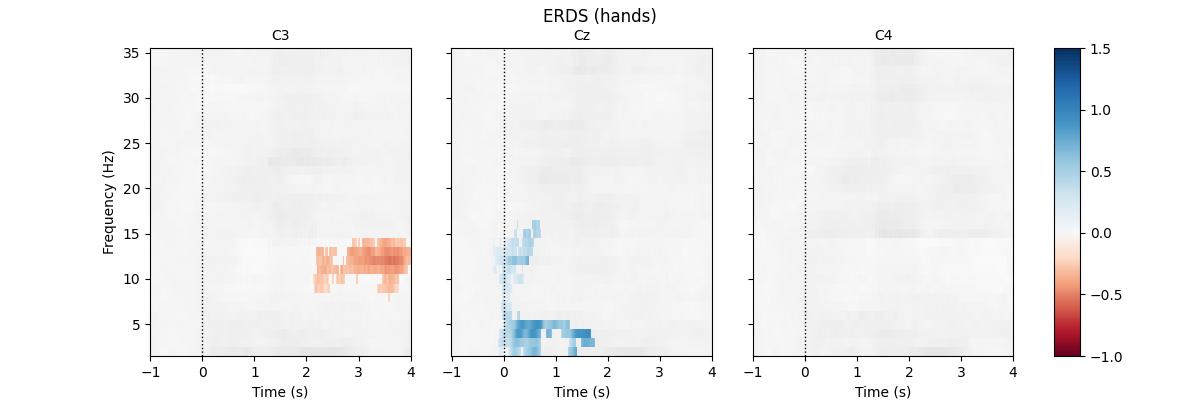

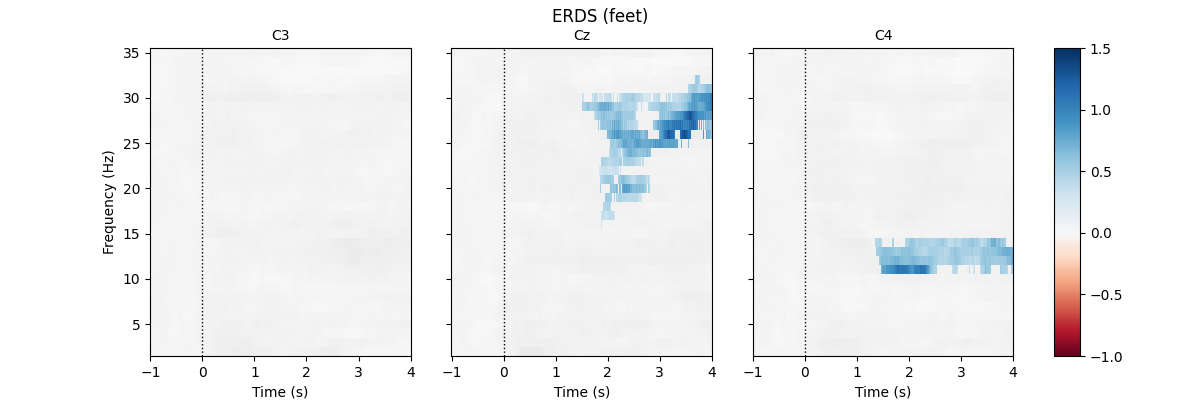

This example calculates and displays ERDS maps of event-related EEG data. ERDS (sometimes also written as ERD/ERS) is short for event-related desynchronization (ERD) and event-related synchronization (ERS) [1]. Conceptually, ERD corresponds to a decrease in power in a specific frequency band relative to a baseline. Similarly, ERS corresponds to an increase in power. An ERDS map is a time/frequency representation of ERD/ERS over a range of frequencies [2]. ERDS maps are also known as ERSP (event-related spectral perturbation) [3].
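The definition above can be made concrete with a minimal sketch. It assumes ERDS is expressed as the power change relative to the mean baseline power, which is what the `mode="percent"` baseline correction used later in this example computes. The function name `erds` and the power values are hypothetical and chosen only for illustration.

```python
def erds(power, baseline_power):
    """Relative power change with respect to the mean baseline power."""
    ref = sum(baseline_power) / len(baseline_power)
    return [(p - ref) / ref for p in power]


baseline_power = [4.0, 5.0, 3.0]  # hypothetical band power before the event
task_power = [2.0, 4.0, 6.0]      # hypothetical band power after the event

# negative values indicate ERD, positive values indicate ERS
print(erds(task_power, baseline_power))  # [-0.5, 0.0, 0.5]
```

A value of -0.5 means that power dropped to half of the baseline level (ERD), whereas 0.5 means that power rose to 1.5 times the baseline level (ERS).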

In this example, we use an EEG BCI data set containing two different motor imagery tasks (imagined hand and feet movement). Our goal is to generate ERDS maps for each of the two tasks.

First, we load the data and create epochs of 5 s length. The data set contains multiple channels, but we will only consider C3, Cz, and C4. We compute maps containing frequencies ranging from 2 to 35 Hz. We map ERD to red and ERS to blue, which is customary in many ERDS publications. Finally, we perform cluster-based permutation tests to estimate significant ERDS values (corrected for multiple comparisons within channels).

# Authors: Clemens Brunner <clemens.brunner@gmail.com>

# Felix Klotzsche <klotzsche@cbs.mpg.de>

#

# License: BSD-3-Clause

As usual, we import everything we need.

import numpy as np

import matplotlib.pyplot as plt

from matplotlib.colors import TwoSlopeNorm

import pandas as pd

import seaborn as sns

import mne

from mne.datasets import eegbci

from mne.io import concatenate_raws, read_raw_edf

from mne.time_frequency import tfr_multitaper

from mne.stats import permutation_cluster_1samp_test as pcluster_test

First, we load and preprocess the data. We use runs 6, 10, and 14 from subject 1 (these runs contain hand and feet motor imagery).

fnames = eegbci.load_data(subject=1, runs=(6, 10, 14))

raw = concatenate_raws([read_raw_edf(f, preload=True) for f in fnames])

raw.rename_channels(lambda x: x.strip('.')) # remove dots from channel names

events, _ = mne.events_from_annotations(raw, event_id=dict(T1=2, T2=3))

Extracting EDF parameters from /home/circleci/mne_data/MNE-eegbci-data/files/eegmmidb/1.0.0/S001/S001R06.edf...

EDF file detected

Setting channel info structure...

Creating raw.info structure...

Reading 0 ... 19999 = 0.000 ... 124.994 secs...

Extracting EDF parameters from /home/circleci/mne_data/MNE-eegbci-data/files/eegmmidb/1.0.0/S001/S001R10.edf...

EDF file detected

Setting channel info structure...

Creating raw.info structure...

Reading 0 ... 19999 = 0.000 ... 124.994 secs...

Extracting EDF parameters from /home/circleci/mne_data/MNE-eegbci-data/files/eegmmidb/1.0.0/S001/S001R14.edf...

EDF file detected

Setting channel info structure...

Creating raw.info structure...

Reading 0 ... 19999 = 0.000 ... 124.994 secs...

Used Annotations descriptions: ['T1', 'T2']

Now we can create 5-second epochs around events of interest.

tmin, tmax = -1, 4
event_ids = dict(hands=2, feet=3)  # map event IDs to tasks

# epochs are padded by 0.5 s on each side; the padding is cropped again
# after the time/frequency decomposition
epochs = mne.Epochs(raw, events, event_ids, tmin - 0.5, tmax + 0.5,
                    picks=('C3', 'Cz', 'C4'), baseline=None, preload=True)

Not setting metadata

45 matching events found

No baseline correction applied

0 projection items activated

Using data from preloaded Raw for 45 events and 961 original time points ...

0 bad epochs dropped

Here we set suitable values for computing ERDS maps. Note especially the cnorm variable, which sets up an asymmetric colormap where the middle color is mapped to zero, even though zero is not the middle value of the colormap range. This does two things: it ensures that zero values will be plotted in white (given that below we select the RdBu colormap), and it makes synchronization and desynchronization look equally prominent in the plots, even though their extreme values are of different magnitudes.

freqs = np.arange(2, 36)  # frequencies from 2-35 Hz
vmin, vmax = -1, 1.5  # set min and max ERDS values in plot
baseline = (-1, 0)  # baseline interval (in s)
cnorm = TwoSlopeNorm(vmin=vmin, vcenter=0, vmax=vmax)  # min, center & max ERDS
kwargs = dict(n_permutations=100, step_down_p=0.05, seed=1,
              buffer_size=None, out_type='mask')  # for cluster test
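To illustrate what an asymmetric, zero-centered color normalization does, here is a simplified pure-Python sketch of a two-slope mapping. It is not matplotlib's implementation: the function `two_slope` is hypothetical, it handles scalars only, and it clips out-of-range values as a simplification. The default limits are the vmin and vmax values used in this example.

```python
def two_slope(value, vmin=-1.0, vcenter=0.0, vmax=1.5):
    """Map value to [0, 1] so that vcenter always lands on 0.5."""
    value = min(max(value, vmin), vmax)  # clip to the range (simplification)
    if value < vcenter:
        # lower half: [vmin, vcenter] is stretched over [0, 0.5]
        return 0.5 * (value - vmin) / (vcenter - vmin)
    # upper half: [vcenter, vmax] is stretched over [0.5, 1]
    return 0.5 + 0.5 * (value - vcenter) / (vmax - vcenter)


for v in (-1.0, -0.5, 0.0, 0.75, 1.5):
    print(v, two_slope(v))
```

Zero maps to 0.5, the middle of the colormap, and both extremes reach the ends of the colormap even though the negative range (1 unit) is shorter than the positive range (1.5 units).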

Finally, we perform time/frequency decomposition over all epochs.

tfr = tfr_multitaper(epochs, freqs=freqs, n_cycles=freqs, use_fft=True,
                     return_itc=False, average=False, decim=2)
tfr.crop(tmin, tmax).apply_baseline(baseline, mode="percent")

for event in event_ids:
    # select desired epochs for visualization
    tfr_ev = tfr[event]
    fig, axes = plt.subplots(1, 4, figsize=(12, 4),
                             gridspec_kw={"width_ratios": [10, 10, 10, 1]})
    for ch, ax in enumerate(axes[:-1]):  # for each channel
        # positive clusters
        _, c1, p1, _ = pcluster_test(tfr_ev.data[:, ch], tail=1, **kwargs)
        # negative clusters
        _, c2, p2, _ = pcluster_test(tfr_ev.data[:, ch], tail=-1, **kwargs)

        # note that we keep clusters with p <= 0.05 from the combined clusters
        # of two independent tests; in this example, we do not correct for
        # these two comparisons
        c = np.stack(c1 + c2, axis=2)  # combined clusters
        p = np.concatenate((p1, p2))  # combined p-values
        mask = c[..., p <= 0.05].any(axis=-1)

        # plot TFR (ERDS map with masking)
        tfr_ev.average().plot([ch], cmap="RdBu", cnorm=cnorm, axes=ax,
                              colorbar=False, show=False, mask=mask,
                              mask_style="mask")

        ax.set_title(epochs.ch_names[ch], fontsize=10)
        ax.axvline(0, linewidth=1, color="black", linestyle=":")  # event
        if ch != 0:
            ax.set_ylabel("")
            ax.set_yticklabels("")
    fig.colorbar(axes[0].images[-1], cax=axes[-1]).ax.set_yscale("linear")
    fig.suptitle(f"ERDS ({event})")
    plt.show()

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.3s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.5s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.7s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.7s finished

Not setting metadata

Applying baseline correction (mode: percent)

Using a threshold of 1.724718

stat_fun(H1): min=-8.552076 max=3.183231

Running initial clustering …

Found 80 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

Using a threshold of -1.724718

stat_fun(H1): min=-8.552076 max=3.183231

Running initial clustering …

Found 67 clusters

Step-down-in-jumps iteration #1 found 1 cluster to exclude from subsequent iterations

Step-down-in-jumps iteration #2 found 0 additional clusters to exclude from subsequent iterations

No baseline correction applied

Using a threshold of 1.724718

stat_fun(H1): min=-4.528367 max=3.706422

Running initial clustering …

Found 88 clusters

Step-down-in-jumps iteration #1 found 1 cluster to exclude from subsequent iterations

Step-down-in-jumps iteration #2 found 0 additional clusters to exclude from subsequent iterations

Using a threshold of -1.724718

stat_fun(H1): min=-4.528367 max=3.706422

Running initial clustering …

Found 58 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

No baseline correction applied

Using a threshold of 1.724718

stat_fun(H1): min=-6.581589 max=3.346448

Running initial clustering …

Found 67 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

Using a threshold of -1.724718

stat_fun(H1): min=-6.581589 max=3.346448

Running initial clustering …

Found 69 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

No baseline correction applied

Using a threshold of 1.713872

stat_fun(H1): min=-3.754759 max=3.360704

Running initial clustering …

Found 71 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

Using a threshold of -1.713872

stat_fun(H1): min=-3.754759 max=3.360704

Running initial clustering …

Found 80 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

No baseline correction applied

Using a threshold of 1.713872

stat_fun(H1): min=-4.992503 max=5.416450

Running initial clustering …

Found 103 clusters

Step-down-in-jumps iteration #1 found 1 cluster to exclude from subsequent iterations

Step-down-in-jumps iteration #2 found 0 additional clusters to exclude from subsequent iterations

Using a threshold of -1.713872

stat_fun(H1): min=-4.992503 max=5.416450

Running initial clustering …

Found 67 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

No baseline correction applied

Using a threshold of 1.713872

stat_fun(H1): min=-6.044340 max=4.070444

Running initial clustering …

Found 92 clusters

Step-down-in-jumps iteration #1 found 1 cluster to exclude from subsequent iterations

Step-down-in-jumps iteration #2 found 0 additional clusters to exclude from subsequent iterations

Using a threshold of -1.713872

stat_fun(H1): min=-6.044340 max=4.070444

Running initial clustering …

Found 51 clusters

Step-down-in-jumps iteration #1 found 0 clusters to exclude from subsequent iterations

No baseline correction applied

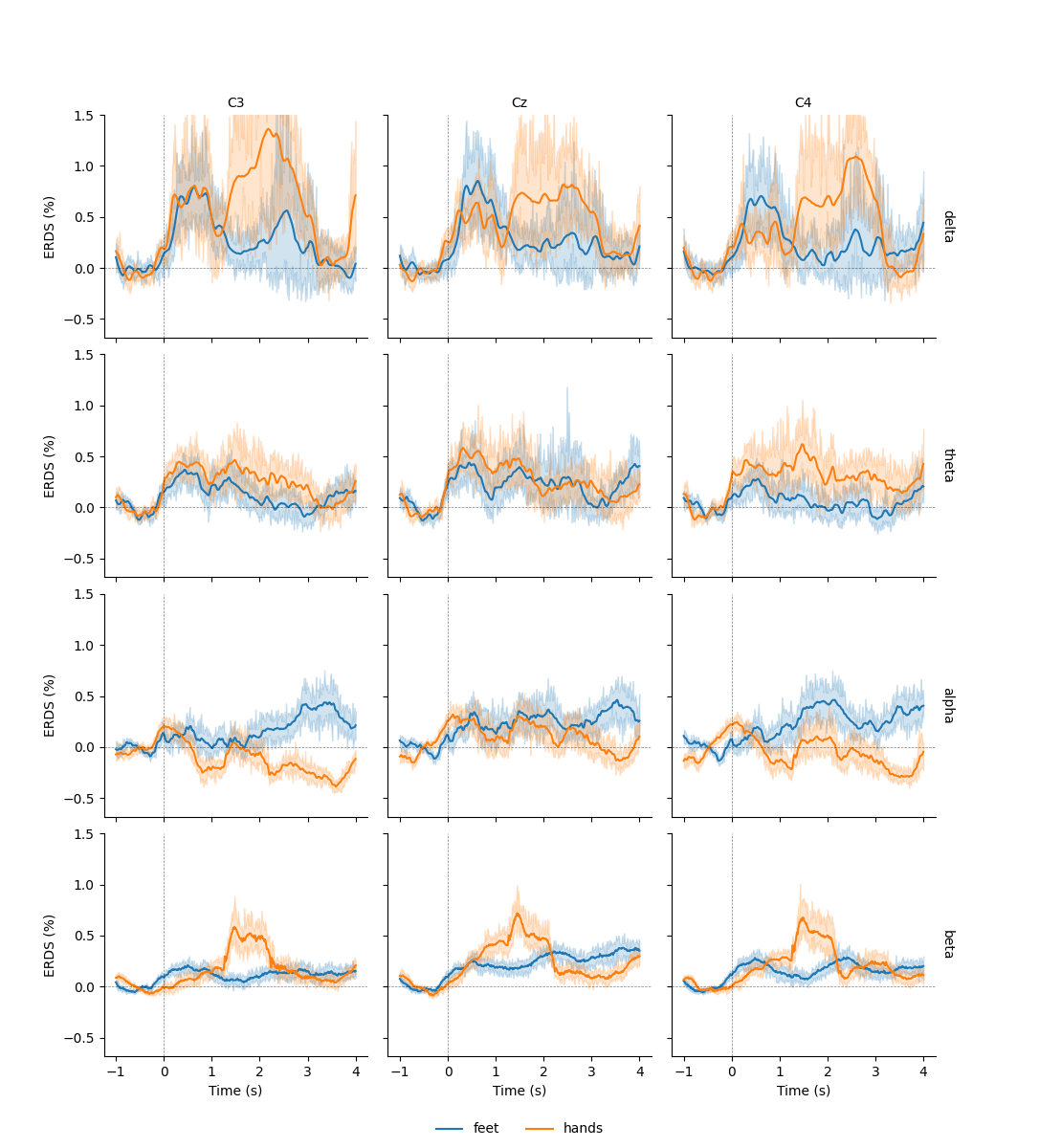
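The core idea behind a one-sample permutation test can be sketched in a few lines. The following is a strongly simplified illustration, not the cluster-based procedure used above: it tests a single value per epoch instead of a full time/frequency map, performs no clustering, and uses hypothetical ERDS values. Under the null hypothesis of no change from baseline, the sign of each epoch's value is arbitrary, so random sign flips yield a reference distribution for the t-statistic.

```python
import random
import statistics


def t_statistic(values):
    """One-sample t-statistic against a mean of zero."""
    n = len(values)
    return statistics.mean(values) / (statistics.stdev(values) / n ** 0.5)


def sign_flip_p_value(values, n_permutations=99, seed=1):
    """One-sided (upper tail) p-value obtained from random sign flips."""
    rng = random.Random(seed)
    observed = t_statistic(values)
    n_extreme = 1  # the observed data counts as one permutation
    for _ in range(n_permutations):
        flipped = [v * rng.choice((-1, 1)) for v in values]
        if t_statistic(flipped) >= observed:
            n_extreme += 1
    return n_extreme / (n_permutations + 1)


# hypothetical ERDS values of eight epochs at one time/frequency point
erds_values = [0.8, 1.1, 0.9, 1.3, 0.7, 1.2, 1.0, 0.95]
p_value = sign_flip_p_value(erds_values)
print(p_value)
```

Because all hypothetical values are clearly positive, almost no sign-flipped data set reaches the observed t-statistic, and the resulting p-value is small. A cluster-based test applies the same logic to the summed statistic of connected supra-threshold points instead of a single value.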

Similar to Epochs objects, we can also export data from EpochsTFR and AverageTFR objects to a Pandas DataFrame. By default, the time column of the exported data frame is in milliseconds. Here, to be consistent with the time-frequency plots, we want to keep it in seconds, which we can achieve by setting time_format=None:

df = tfr.to_data_frame(time_format=None)

df.head()

This allows us to use additional plotting functions like seaborn.lineplot() to plot confidence bands:

df = tfr.to_data_frame(time_format=None, long_format=True)

# Map to frequency bands:
freq_bounds = {'_': 0,
               'delta': 3,
               'theta': 7,
               'alpha': 13,
               'beta': 35,
               'gamma': 140}
df['band'] = pd.cut(df['freq'], list(freq_bounds.values()),
                    labels=list(freq_bounds)[1:])

# Filter to retain only relevant frequency bands:
freq_bands_of_interest = ['delta', 'theta', 'alpha', 'beta']
df = df[df.band.isin(freq_bands_of_interest)]
df['band'] = df['band'].cat.remove_unused_categories()

# Order channels for plotting:
df['channel'] = df['channel'].cat.reorder_categories(('C3', 'Cz', 'C4'),
                                                     ordered=True)

g = sns.FacetGrid(df, row='band', col='channel', margin_titles=True)
g.map(sns.lineplot, 'time', 'value', 'condition', n_boot=10)
axline_kw = dict(color='black', linestyle='dashed', linewidth=0.5, alpha=0.5)
g.map(plt.axhline, y=0, **axline_kw)
g.map(plt.axvline, x=0, **axline_kw)
g.set(ylim=(None, 1.5))
g.set_axis_labels("Time (s)", "ERDS (%)")
g.set_titles(col_template="{col_name}", row_template="{row_name}")
g.add_legend(ncol=2, loc='lower center')
g.fig.subplots_adjust(left=0.1, right=0.9, top=0.9, bottom=0.08)

Converting "condition" to "category"...

Converting "epoch" to "category"...

Converting "channel" to "category"...

Converting "ch_type" to "category"...

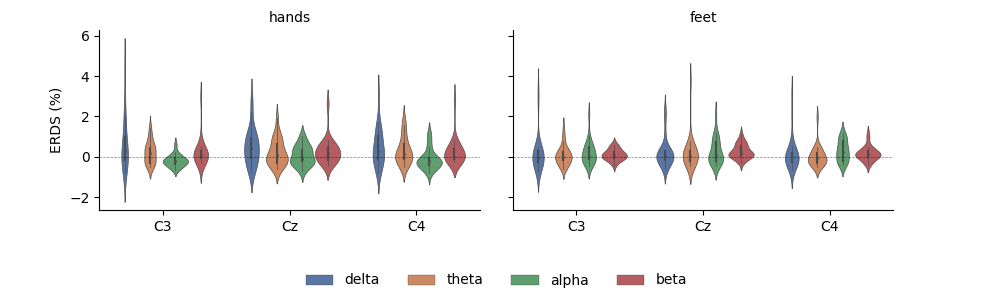
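The band assignment in the code above relies on pd.cut, which by default creates right-closed bins such as (0, 3] and (3, 7]. The following pure-Python sketch mimics that binning with the same edges to show which frequencies end up in which band. The helper `band_of` is hypothetical and only serves as an illustration.

```python
from bisect import bisect_left

edges = [0, 3, 7, 13, 35, 140]  # same bin edges as freq_bounds
labels = ["delta", "theta", "alpha", "beta", "gamma"]


def band_of(freq):
    """Return the band whose right-closed interval contains freq."""
    idx = bisect_left(edges, freq) - 1
    if 0 <= idx < len(labels):
        return labels[idx]
    return None  # outside all bins


for freq in (2, 3, 4, 7, 8, 13, 14, 35):
    print(freq, band_of(freq))
```

With the integer frequencies from 2 to 35 Hz used in this example, delta covers 2 to 3 Hz, theta 4 to 7 Hz, alpha 8 to 13 Hz, and beta 14 to 35 Hz. No frequency falls into the gamma bin, which is why that category is removed as unused.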

Having the data as a DataFrame also facilitates subsetting, grouping, and other transforms. Here, we use seaborn to plot the average ERDS in the motor imagery interval as a function of frequency band and imagery condition:

df_mean = (df.query('time > 1')
             .groupby(['condition', 'epoch', 'band', 'channel'])[['value']]
             .mean()
             .reset_index())

g = sns.FacetGrid(df_mean, col='condition', col_order=['hands', 'feet'],
                  margin_titles=True)
g = (g.map(sns.violinplot, 'channel', 'value', 'band',
           palette='deep', order=['C3', 'Cz', 'C4'],
           hue_order=freq_bands_of_interest,
           linewidth=0.5).add_legend(ncol=4, loc='lower center'))
g.map(plt.axhline, **axline_kw)
g.set_axis_labels("", "ERDS (%)")
g.set_titles(col_template="{col_name}", row_template="{row_name}")
g.fig.subplots_adjust(left=0.1, right=0.9, top=0.9, bottom=0.3)
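The query and groupby steps above boil down to filtering rows by time and averaging the remaining values per group. A minimal pure-Python sketch of that logic, using hypothetical long-format rows and grouping only by condition and band for brevity, could look like this:

```python
from collections import defaultdict

# hypothetical long-format rows: (condition, band, time in s, ERDS value)
rows = [
    ("hands", "alpha", 0.5, -0.2),
    ("hands", "alpha", 1.5, -0.5),
    ("hands", "alpha", 2.5, -0.25),
    ("feet", "alpha", 1.5, 0.25),
    ("feet", "alpha", 2.5, 0.75),
]

groups = defaultdict(list)
for condition, band, time, value in rows:
    if time > 1:  # keep only samples after the first second
        groups[(condition, band)].append(value)

means = {key: sum(vals) / len(vals) for key, vals in groups.items()}
print(means)
```

The sample at 0.5 s is excluded by the time filter, so the mean for the hands condition is computed from the two later samples only.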

References