Note

Go to the end to download the full example code

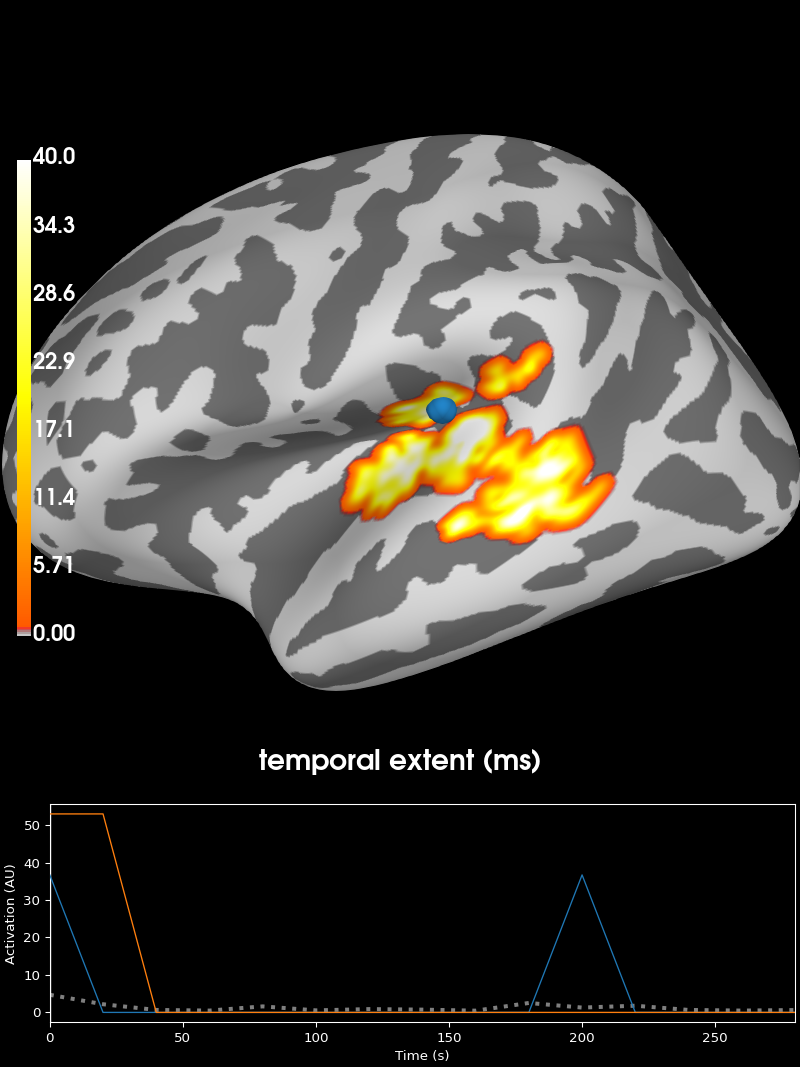

2 samples permutation test on source data with spatio-temporal clustering#

Tests if the source space data are significantly different between 2 groups of subjects (simulated here using one subject’s data). The multiple comparisons problem is addressed with a cluster-level permutation test across space and time.

# Authors: Alexandre Gramfort <alexandre.gramfort@inria.fr>

# Eric Larson <larson.eric.d@gmail.com>

# License: BSD-3-Clause

import numpy as np

from scipy import stats as stats

import mne

from mne import spatial_src_adjacency

from mne.stats import spatio_temporal_cluster_test, summarize_clusters_stc

from mne.datasets import sample

print(__doc__)

Set parameters#

data_path = sample.data_path()

meg_path = data_path / "MEG" / "sample"

stc_fname = meg_path / "sample_audvis-meg-lh.stc"

subjects_dir = data_path / "subjects"

src_fname = subjects_dir / "fsaverage" / "bem" / "fsaverage-ico-5-src.fif"

# Load stc to in common cortical space (fsaverage)

stc = mne.read_source_estimate(stc_fname)

stc.resample(50, npad="auto")

# Read the source space we are morphing to

src = mne.read_source_spaces(src_fname)

fsave_vertices = [s["vertno"] for s in src]

morph = mne.compute_source_morph(

stc,

"sample",

"fsaverage",

spacing=fsave_vertices,

smooth=20,

subjects_dir=subjects_dir,

)

stc = morph.apply(stc)

n_vertices_fsave, n_times = stc.data.shape

tstep = stc.tstep * 1000 # convert to milliseconds

n_subjects1, n_subjects2 = 6, 7

print("Simulating data for %d and %d subjects." % (n_subjects1, n_subjects2))

# Let's make sure our results replicate, so set the seed.

np.random.seed(0)

X1 = np.random.randn(n_vertices_fsave, n_times, n_subjects1) * 10

X2 = np.random.randn(n_vertices_fsave, n_times, n_subjects2) * 10

X1[:, :, :] += stc.data[:, :, np.newaxis]

# make the activity bigger for the second set of subjects

X2[:, :, :] += 3 * stc.data[:, :, np.newaxis]

# We want to compare the overall activity levels for each subject

X1 = np.abs(X1) # only magnitude

X2 = np.abs(X2) # only magnitude

Reading a source space...

[done]

Reading a source space...

[done]

2 source spaces read

surface source space present ...

Computing morph matrix...

Left-hemisphere map read.

Right-hemisphere map read.

20 smooth iterations done.

20 smooth iterations done.

[done]

[done]

Simulating data for 6 and 7 subjects.

Compute statistic#

To use an algorithm optimized for spatio-temporal clustering, we just pass the spatial adjacency matrix (instead of spatio-temporal)

print("Computing adjacency.")

adjacency = spatial_src_adjacency(src)

# Note that X needs to be a list of multi-dimensional array of shape

# samples (subjects_k) × time × space, so we permute dimensions

X1 = np.transpose(X1, [2, 1, 0])

X2 = np.transpose(X2, [2, 1, 0])

X = [X1, X2]

# Now let's actually do the clustering. This can take a long time...

# Here we set the threshold quite high to reduce computation,

# and use a very low number of permutations for the same reason.

n_permutations = 50

p_threshold = 0.001

f_threshold = stats.distributions.f.ppf(

1.0 - p_threshold / 2.0, n_subjects1 - 1, n_subjects2 - 1

)

print("Clustering.")

F_obs, clusters, cluster_p_values, H0 = clu = spatio_temporal_cluster_test(

X,

adjacency=adjacency,

n_jobs=None,

n_permutations=n_permutations,

threshold=f_threshold,

buffer_size=None,

)

# Now select the clusters that are sig. at p < 0.05 (note that this value

# is multiple-comparisons corrected).

good_cluster_inds = np.where(cluster_p_values < 0.05)[0]

Computing adjacency.

-- number of adjacent vertices : 20484

Clustering.

stat_fun(H1): min=0.000000 max=303.632172

Running initial clustering …

Found 361 clusters

0%| | Permuting : 0/49 [00:00<?, ?it/s]

2%|2 | Permuting : 1/49 [00:00<00:04, 9.86it/s]

4%|4 | Permuting : 2/49 [00:00<00:03, 14.96it/s]

6%|6 | Permuting : 3/49 [00:00<00:02, 18.10it/s]

8%|8 | Permuting : 4/49 [00:00<00:02, 20.20it/s]

12%|#2 | Permuting : 6/49 [00:00<00:01, 26.51it/s]

14%|#4 | Permuting : 7/49 [00:00<00:01, 26.94it/s]

16%|#6 | Permuting : 8/49 [00:00<00:01, 27.28it/s]

18%|#8 | Permuting : 9/49 [00:00<00:01, 27.54it/s]

22%|##2 | Permuting : 11/49 [00:00<00:01, 31.14it/s]

24%|##4 | Permuting : 12/49 [00:00<00:01, 30.96it/s]

27%|##6 | Permuting : 13/49 [00:00<00:01, 30.81it/s]

31%|### | Permuting : 15/49 [00:00<00:01, 33.54it/s]

33%|###2 | Permuting : 16/49 [00:00<00:00, 33.18it/s]

35%|###4 | Permuting : 17/49 [00:00<00:00, 32.86it/s]

37%|###6 | Permuting : 18/49 [00:00<00:00, 32.58it/s]

41%|#### | Permuting : 20/49 [00:00<00:00, 34.77it/s]

43%|####2 | Permuting : 21/49 [00:00<00:00, 34.35it/s]

45%|####4 | Permuting : 22/49 [00:00<00:00, 33.99it/s]

49%|####8 | Permuting : 24/49 [00:00<00:00, 35.88it/s]

51%|#####1 | Permuting : 25/49 [00:00<00:00, 35.41it/s]

53%|#####3 | Permuting : 26/49 [00:00<00:00, 34.98it/s]

57%|#####7 | Permuting : 28/49 [00:00<00:00, 36.67it/s]

59%|#####9 | Permuting : 29/49 [00:00<00:00, 36.17it/s]

61%|######1 | Permuting : 30/49 [00:00<00:00, 35.72it/s]

65%|######5 | Permuting : 32/49 [00:00<00:00, 37.28it/s]

67%|######7 | Permuting : 33/49 [00:00<00:00, 36.76it/s]

71%|#######1 | Permuting : 35/49 [00:00<00:00, 38.20it/s]

73%|#######3 | Permuting : 36/49 [00:01<00:00, 37.66it/s]

76%|#######5 | Permuting : 37/49 [00:01<00:00, 37.16it/s]

80%|#######9 | Permuting : 39/49 [00:01<00:00, 38.52it/s]

82%|########1 | Permuting : 40/49 [00:01<00:00, 37.97it/s]

84%|########3 | Permuting : 41/49 [00:01<00:00, 37.46it/s]

88%|########7 | Permuting : 43/49 [00:01<00:00, 38.76it/s]

90%|########9 | Permuting : 44/49 [00:01<00:00, 38.22it/s]

92%|#########1| Permuting : 45/49 [00:01<00:00, 37.71it/s]

96%|#########5| Permuting : 47/49 [00:01<00:00, 38.95it/s]

98%|#########7| Permuting : 48/49 [00:01<00:00, 38.40it/s]

100%|##########| Permuting : 49/49 [00:01<00:00, 39.01it/s]

100%|##########| Permuting : 49/49 [00:01<00:00, 36.61it/s]

Visualize the clusters#

print("Visualizing clusters.")

# Now let's build a convenient representation of each cluster, where each

# cluster becomes a "time point" in the SourceEstimate

fsave_vertices = [np.arange(10242), np.arange(10242)]

stc_all_cluster_vis = summarize_clusters_stc(

clu, tstep=tstep, vertices=fsave_vertices, subject="fsaverage"

)

# Let's actually plot the first "time point" in the SourceEstimate, which

# shows all the clusters, weighted by duration

# blue blobs are for condition A != condition B

brain = stc_all_cluster_vis.plot(

"fsaverage",

hemi="both",

views="lateral",

subjects_dir=subjects_dir,

time_label="temporal extent (ms)",

clim=dict(kind="value", lims=[0, 1, 40]),

)

Visualizing clusters.

Total running time of the script: ( 0 minutes 19.064 seconds)

Estimated memory usage: 243 MB